Many Web sites are served by a single PC; however, high transaction volumes might require several Web servers, along with database, application, and other types of servers. This diagram shows a server farm with four Web servers:

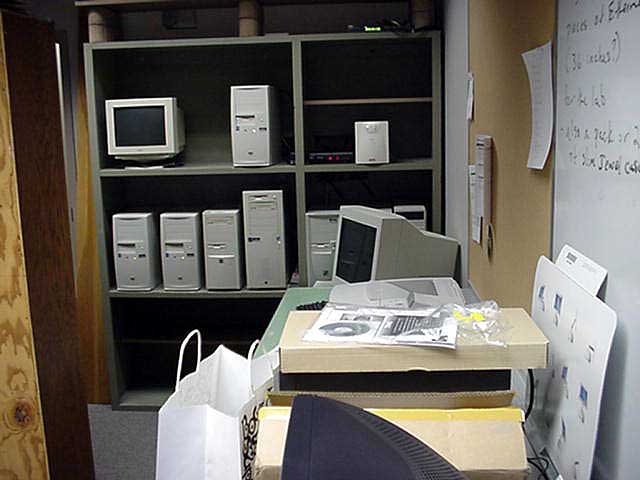

Here is a small server farm at North Dakota State University:

As the number of servers and cables grows, it is necessary to clean the room up:

Rack-mounted servers are more orderly. The racks are a standard size, accommodating servers that are as small as 1.75 inches tall ("pizza boxes"). Height is measured in rack units: a 1U server is 1.75 inches tall, a 2U server is 3.5 inches tall, and so on. An Apple server is shown here with the rack door open and closed.
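
The rack-unit arithmetic can be sketched in a few lines of Python. This is only an illustration: the function names are hypothetical, and the 42U rack height used in the example is an assumption (a common full-height size), not a figure from the text above.

```python
RACK_UNIT_INCHES = 1.75  # height of one rack unit (1U)


def height_inches(units: int) -> float:
    """Return the height in inches of equipment occupying `units` rack units."""
    if units < 1:
        raise ValueError("units must be a positive integer")
    return units * RACK_UNIT_INCHES


def servers_per_rack(rack_units: int, server_units: int) -> int:
    """Count how many servers of a given height fit in a rack.

    Ignores space taken by switches, power distribution, and cable management.
    """
    if rack_units < 0 or server_units < 1:
        raise ValueError("rack_units must be >= 0 and server_units >= 1")
    return rack_units // server_units


if __name__ == "__main__":
    for u in (1, 2, 4):
        print(f"{u}U = {height_inches(u)} inches")
    # Assumed 42U rack; substitute the real height of your rack.
    print("1U servers per rack:", servers_per_rack(42, 1))
    print("2U servers per rack:", servers_per_rack(42, 2))
```

In practice a rack holds fewer servers than this upper bound, since some units go to network switches and power equipment.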

Blade servers are used in high-density data centers. This server blade can hold two disk drives, two CPUs, memory and Ethernet connections:

The rack on the left holds 14 chassis with 20 blade slots each, for a total of 280 slots. A fully loaded rack could hold many servers, switches, and other devices, and it would consume a lot of power.

There is a strong movement today toward dividing the resources of a single computer into several virtual servers. Each virtual server runs its own operating system, and the operating systems and the applications running under them are completely independent. Resources can be switched from one virtual machine to another when loads vary. This reduces the number of machines to purchase and manage and simplifies system administration.

But virtual servers might not reside at your location or even in a colocation facility under your control. Increasingly, virtual servers are on the Internet. Since hosting providers charge only for what you use, there is essentially no hardware cost during application development, and once an application is deployed, hardware capacity easily grows to satisfy demand. Here we see 11 virtual servers configured and deployed on the Internet using 3Tera's drag-and-drop configurator.

And, just as virtual servers, which share a CPU, are becoming popular, the cost of CPUs is shrinking to the point where they may not be worth sharing. Intel has demonstrated a 48-processor chip that includes shared memory and an on-chip network connecting the processors. The power/speed tradeoff can also be adjusted under program control. As Intel says, they are working toward a "data center on a chip."

The FAWN (Fast Array of Wimpy Nodes) project at Carnegie Mellon University is prototyping this approach, and the researchers say the results are promising. Preliminary results indicate that FAWN systems are cost-competitive with conventional architectures but consume 3 to 10 times less power. Power consumption is a large and growing proportion of data center cost.
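
To make the "3 to 10 times less power" figure concrete, here is a minimal sketch that converts a reduction factor into an absolute power draw and a percentage saving. The 100 kW baseline is a made-up number for illustration only, and the function name is hypothetical; nothing here comes from the FAWN measurements themselves.

```python
def reduced_power(baseline_watts: float, factor: float) -> float:
    """Return the power drawn if consumption falls by `factor` times."""
    if factor <= 0:
        raise ValueError("factor must be positive")
    return baseline_watts / factor


def percent_saved(factor: float) -> float:
    """Return the percentage of baseline power saved at a given reduction factor."""
    if factor <= 0:
        raise ValueError("factor must be positive")
    return (1.0 - 1.0 / factor) * 100.0


if __name__ == "__main__":
    baseline = 100_000.0  # hypothetical conventional cluster drawing 100 kW
    for factor in (3.0, 10.0):
        watts = reduced_power(baseline, factor)
        print(f"{factor:g}x less power: {watts:,.0f} W "
              f"({percent_saved(factor):.1f}% saved)")
```

Under these assumptions, a 3x reduction saves about two-thirds of the power and a 10x reduction saves 90 percent, so the stated range corresponds to roughly a 67 to 90 percent cut in the power bill for the same work.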